In a previous article, I wrote about the trend to elevate Artificial Intelligence (AI) to the level of a deity, with a quasi-religious faith in its capacity to improve humanity's condition. A recent model designed to show that AI could even write its own scriptures, however, testifies to the flawed understanding of religion on which these comparisons rest.

The website in question, god.iv.ai, was developed by Vince Lynch, founder of IV.AI, to promote the comparisons he drew between organised religion and Artificial Intelligence. The basic AI model, having been fed verses from the King James Version (KJV), generates new versions, which one journalist described as 'eerily similar' to the original.

But when I gave it a whirl, the results were decidedly nonsensical. Some were let down by poor grammar – for example: 'And the fourth day' – while others were let down by their incoherent content: 'The sceptre shall not be known in the valley, and found mandrakes in the neck of thine own bowels shall be well with thee, and make ready; for these men shall dine with me in time to buy food.' Of course, since it is a random generator, keep clicking 'refresh' and you might get one which could pass muster, like: 'And Reu lived after he begat Peleg four hundred and five years, and begat Terah'. Good old genealogies.

But even if all the lines were internally coherent, what would that actually mean?

'Mean' is, in fact, the pertinent word. The software may have put words together, but it has not created meaning, which depends on there being a mind behind the text. Meaning has to be intended, and that requires imagination, which AI fundamentally lacks. If the model proves anything, it is that Lynch does not recognise the Bible as a living text, but treats it more like an arbitrary collection of phrases.

He told VentureBeat: 'Teaching humans about religious education is similar to the way we teach knowledge to machines: repetition of many examples that are versions of a concept you want the machine to learn'. He added: 'The concept of teaching a machine to learn...and then teaching it to teach... [or write AI] isn't so different from the concept of a holy trinity or a being achieving enlightenment after many lessons learned with varying levels of success and failure.'

No, Lynch didn't just stumble upon a new analogy to describe the Trinity which supersedes the classic egg example. Imagining that God the Father uploaded his knowledge to God the Son who in turn uploaded it to God the Spirit, and that this resembles the stages of enlightenment in religions like Buddhism, lies somewhere between misguided and heretical, depending on your perspective. Not only is the relationship between the persons of the Trinity mischaracterised, but so is the way in which God knows, and in which we know God.

As created beings, we cannot fathom the way that God, the Creator, thinks and knows. His mind is not like a human brain only bigger, with, say, a better grasp of geometry and an unfailing memory. Nor, contrary to Lynch's description, do we learn about God through tedious rote-learning. We learn through experience, trial and error, seeing God's faithfulness in action and, ultimately, by his Spirit and grace.

This is so because religion is not merely a matter of propositional knowledge, which could be churned out by a random generator. It involves the body: smells, sights and sounds may be an undervalued part of our religious experience – especially within Protestant Christianity – but they are nevertheless fundamental. After all, part of the meaning of the Incarnation is that God's knowledge becomes experiential: He knows not just the scientific theory of hunger and fatigue, for example, or the psychology behind loneliness, but has experienced them in the body of Jesus.

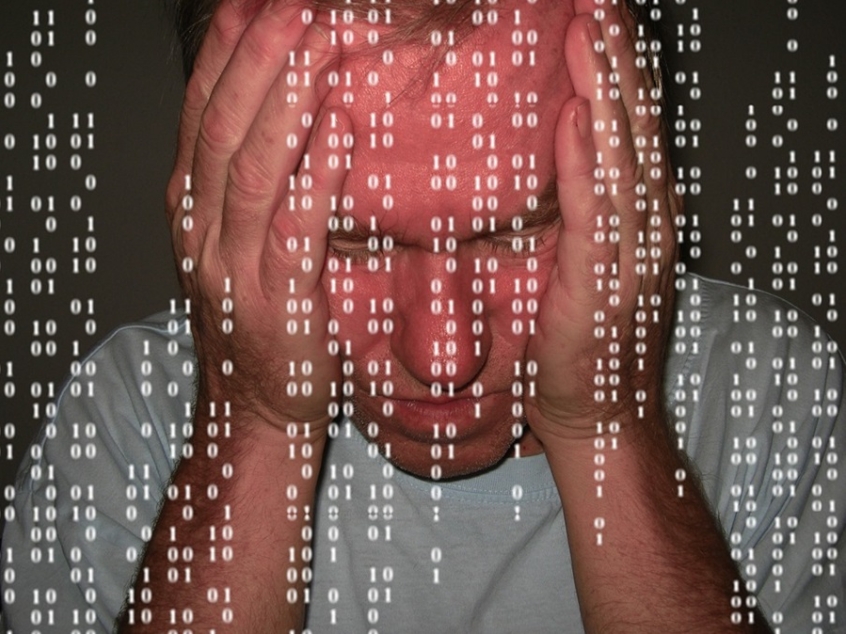

By comparison, the substitute guide to the good life that AI could provide sounds markedly cold and impersonal. Robbee Minicola, head of Wunderman AI Services, describes its potential thus: '[For a Christian] one kind of large data asset pertaining to God is the Old and New Testament. So, in terms of expressing machine learning algorithms over the Christian Bible to ascertain communicable insights on "what God would do" or "what God would say" – you might just be onto something here. In terms of extending what God would do way back then to what God would do today – you may also have something there.'

This presents a vision of God as the owner of the ultimate data set, not as a relational being who speaks in narrative, parable and poetry rather than mathematical equations or code. The Bible is revelation from outside human experience, not 'life-hacks' deduced from empirical research or life experience. Nor is following God's will about doing what is most rational given the data available. After all, Paul described the Cross as 'a stumbling block to Jews and foolishness to Gentiles' (1 Corinthians 1:23). AI may be effective at processing data but it cannot be trusted to act ethically: it does not know good from evil, has no conscience, and is inscribed with all the prejudices of its makers.

Show me an AI that knows the innermost heart of every human who has ever lived, that has the power to bring good out of evil, delights in showing mercy, and is love at its very core, and you'll have come close to finding me a substitute for the God I worship. Until then, everything is cheap imitation.